Docker Compose

Introduction to Compose

Compose is a tool for defining and running multi-container Docker applications. With Compose, you use a YAML file to configure all the services your application needs. Then, with a single command, you create and start every service from that configuration.

Using Compose is a three-step process.

- Use a Dockerfile to define the application’s environment.

- Use docker-compose.yml to define the services that make up the application so that they can run together in an isolated environment.

- Finally, run the docker-compose up command to start and run the entire application.

An example docker-compose.yml looks like this (the configuration parameters are described below):

# yaml Configuration Example
version: '3'
services:
  web:
    build: .
    ports:
      - "5000:5000"
    volumes:
      - .:/code
      - logvolume01:/var/log
    links:
      - redis
  redis:
    image: redis
volumes:
  logvolume01: {}

Compose installation

On Linux, you can download the Compose binary from GitHub. The latest release is available at https://github.com/docker/compose/releases.

Run the following command to download a stable release of Docker Compose (v2.2.2 in this example).

$ sudo curl -L "https://github.com/docker/compose/releases/download/v2.2.2/docker-compose-$(uname -s)-$(uname -m)" -o /usr/local/bin/docker-compose

To install another version of Compose, replace v2.2.2.

Because the Docker Compose binaries are hosted on GitHub, downloads can be slow or unreliable on some networks.

In that case, you can download Docker Compose from a mirror by running the following command.

$ curl -L https://get.daocloud.io/docker/compose/releases/download/v2.4.1/docker-compose-`uname -s`-`uname -m` > /usr/local/bin/docker-compose

Apply executable permissions to the binary.

$ sudo chmod +x /usr/local/bin/docker-compose

Create a symbolic link.

$ sudo ln -s /usr/local/bin/docker-compose /usr/bin/docker-compose

Test whether the installation was successful. The version reported depends on the release you installed.

$ docker-compose version
docker-compose version 1.24.1, build 4667896b

Note: On Alpine, the following dependencies are required: py-pip, python-dev, libffi-dev, openssl-dev, gcc, libc-dev, and make.

macOS

Docker Desktop and Docker Toolbox for Mac already include Compose and other Docker applications, so Mac users do not need to install Compose separately.

Windows

Docker Desktop and Docker Toolbox for Windows already include Compose and other Docker applications, so Windows users do not need to install Compose separately.

Usage

1. Preparation

Create a test directory.

$ mkdir composetest
$ cd composetest

Create a file called app.py in the test directory and paste in the following.

import time

import redis
from flask import Flask

app = Flask(__name__)
cache = redis.Redis(host='redis', port=6379)

def get_hit_count():
    retries = 5
    while True:
        try:
            return cache.incr('hits')
        except redis.exceptions.ConnectionError as exc:
            if retries == 0:
                raise exc
            retries -= 1
            time.sleep(0.5)

@app.route('/')
def hello():
    count = get_hit_count()
    return 'Hello World! I have been seen {} times.\n'.format(count)

In this example, redis is the hostname of the redis container on the application’s network, and 6379 is the port it uses.

Create another file in the composetest directory called requirements.txt with the following contents.

flask
redis

2. Create the Dockerfile

In the composetest directory, create a file named Dockerfile with the following contents.

FROM python:3.7-alpine
WORKDIR /code
ENV FLASK_APP app.py
ENV FLASK_RUN_HOST 0.0.0.0
RUN apk add --no-cache gcc musl-dev linux-headers
COPY requirements.txt requirements.txt
RUN pip install -r requirements.txt
COPY . .
CMD ["flask", "run"]

Dockerfile contents explained:

- FROM python:3.7-alpine: Start building the image from the Python 3.7 Alpine image.

- WORKDIR /code: Set the working directory to /code.

- ENV FLASK_APP app.py and ENV FLASK_RUN_HOST 0.0.0.0: Set the environment variables used by the flask command.

- RUN apk add --no-cache gcc musl-dev linux-headers: Install gcc and other build dependencies so that Python packages such as MarkupSafe and SQLAlchemy can compile their speedups.

- COPY requirements.txt requirements.txt and RUN pip install -r requirements.txt: Copy requirements.txt and install the Python dependencies.

- COPY . .: Copy the current directory (.) of the project into the working directory (.) of the image.

- CMD ["flask", "run"]: Set the container’s default command to flask run.

3. Create docker-compose.yml

Create a file named docker-compose.yml in the test directory, and paste the following.

# yaml Configuration
version: '3'
services:
  web:
    build: .
    ports:
      - "5000:5000"
  redis:
    image: "redis:alpine"

This Compose file defines two services: web and redis.

- web: The web service uses an image built from the Dockerfile in the current directory. It binds the container and the host machine to port 5000, the default port of the Flask web server.

- redis: This redis service uses the public Redis image from Docker Hub.
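As an optional refinement, the web service could bind-mount the project directory, as the introductory example does with .:/code, so that code changes appear in the container without rebuilding the image. The sketch below assumes the Dockerfile above (WORKDIR /code). The FLASK_DEBUG variable is an assumption that is not part of the original example; it relies on Flask's debug reloader.

```yaml
# Sketch only: a development variant of docker-compose.yml.
version: '3'
services:
  web:
    build: .
    ports:
      - "5000:5000"
    volumes:
      - .:/code            # bind-mount the project directory over /code in the container
    environment:
      FLASK_DEBUG: "true"  # assumption: enables Flask's reloader so edits take effect
  redis:
    image: "redis:alpine"
```

With a setup like this, editing app.py on the host should be enough to see the change after a page refresh, since the container reads the file through the bind mount.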

4. Build and run your application with Compose

In the test directory, run the following command to start the application.

$ docker-compose up

To run the services in the background, add the -d option.

$ docker-compose up -d

yml configuration reference

version

Specifies which version of the Compose file format this yml file follows.

build

Specifies the context path used to build the image.

For example, the webapp service below is built from the Dockerfile in the context path ./dir, that is, ./dir/Dockerfile.

version: "3.7"
services:
  webapp:
    build: ./dir

Alternatively, build can be an object that specifies the path under context and, optionally, a Dockerfile and args.

version: "3.7"
services:
  webapp:
    build:
      context: ./dir
      dockerfile: dockerfile-alternate
      args:
        buildno: 1
      labels:
        - "com.example.description=Accounting webapp"
        - "com.example.department=Finance"
        - "com.example.labels-with-empty-value"
      target: prod

- context: the context path.

- dockerfile: the name of the Dockerfile used to build the image.

- args: build arguments, which are variables that are accessible only during the build process.

- labels: labels to set on the built image.

- target: for a multi-stage build, the stage to build.
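The args mapping can also be written as a list, and a build argument is only visible to the Dockerfile if it is declared there with ARG. A minimal sketch, reusing the buildno argument from the example above; the gitcommithash argument and its value are hypothetical:

```yaml
version: "3.7"
services:
  webapp:
    build:
      context: ./dir
      args:
        - buildno=1
        - gitcommithash=cdc3b19   # hypothetical second argument
# The Dockerfile in ./dir would declare the arguments before using them, e.g.:
#   ARG buildno
#   ARG gitcommithash
#   RUN echo "Build number: $buildno"
```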

cap_add, cap_drop

Adds or drops Linux kernel capabilities for the container.

cap_add:
  - ALL # Add all capabilities

cap_drop:
  - SYS_PTRACE # Drop the ptrace capability

cgroup_parent

Specifies a parent cgroup for the container, which means the container inherits that group’s resource limits.

cgroup_parent: m-executor-abcd

command

Overrides the container’s default startup command.

command: ["bundle", "exec", "thin", "-p", "3000"]

container_name

Specifies a custom container name instead of the generated default name.

container_name: my-web-container

depends_on

Set dependencies.

- docker-compose up : Starts services in dependency order. In the following example, db and redis are started first before web is started.

- docker-compose up SERVICE : Automatically includes dependencies for SERVICE. In the following example, docker-compose up web will also create and start db and redis.

- docker-compose stop : Stops the services in dependency order. In the following example, web is stopped before db and redis.

version: "3.7"
services:
  web:
    build: .
    depends_on:
      - db
      - redis
  redis:
    image: redis
  db:
    image: postgres

Note: depends_on only controls start order. The web service does not wait for redis and db to be fully started and ready before it starts.
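If web must wait until db is actually ready, one option is the long form of depends_on combined with a healthcheck. This is a sketch that assumes Docker Compose v2 (the Compose Specification); the version 3.x file format read by older docker-compose releases does not accept condition. The pg_isready check and the password value are illustrative assumptions.

```yaml
# Assumes Docker Compose v2, where the top-level version key is not needed.
services:
  web:
    build: .
    depends_on:
      db:
        condition: service_healthy   # wait until the db healthcheck passes
      redis:
        condition: service_started   # same behavior as the short list form
  redis:
    image: redis
  db:
    image: postgres
    environment:
      POSTGRES_PASSWORD: example     # placeholder value; the postgres image requires a password
    healthcheck:
      test: ["CMD-SHELL", "pg_isready -U postgres"]
      interval: 5s
      timeout: 3s
      retries: 5
```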

deploy

Specifies the configuration related to the deployment and operation of the service. Useful only in swarm mode.

version: "3.7"
services:
  redis:
    image: redis:alpine
    deploy:
      mode: replicated
      replicas: 6
      endpoint_mode: dnsrr
      labels:
        description: "This redis service label"
      resources:
        limits:
          cpus: '0.50'
          memory: 50M
        reservations:
          cpus: '0.25'
          memory: 20M
      restart_policy:
        condition: on-failure
        delay: 5s
        max_attempts: 3
        window: 120s

Optional parameters:

endpoint_mode: how external clients connect to the swarm service.

endpoint_mode: vip
# Docker assigns the service a virtual IP (VIP). All requests reach the machines behind the service through this virtual IP.

endpoint_mode: dnsrr
# DNS round-robin (DNSRR). Each request resolves to one address from the service's list of IP addresses, chosen in rotation.

labels: sets labels on the service. These labels apply to the service only, not to its containers. To label the containers instead, use the labels key at the same level as deploy.

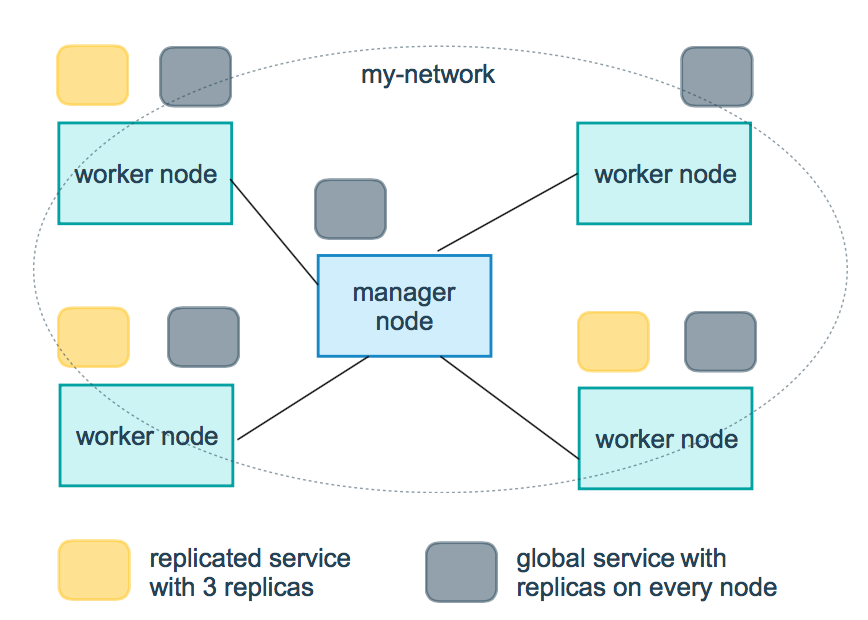

mode: Specify the mode of service provisioning.

-

replicated: replicates the service, replicates the specified service to the machines in the cluster.

-

global: global service, the service will be deployed to each node of the cluster.

-

Illustration: The yellow square in the figure below shows the operation of replicated mode, and the gray square shows the operation of global mode.

replicas: when mode is replicated, use this parameter to set the number of containers to run.

resources: configures limits on resource usage. The example above sets the CPU share and memory that the redis service may use (limits) and is guaranteed (reservations), which helps avoid failures caused by excessive resource usage.

restart_policy: configures how to restart containers when they exit.

- condition: one of none, on-failure, or any (default: any).

- delay: how long to wait between restart attempts (default: 0).

- max_attempts: how many times to attempt a restart before giving up (default: keep retrying).

- window: how long to wait before deciding whether a restart has succeeded (default: decide immediately).

rollback_config: configures how the service should be rolled back if an update fails.

- parallelism: the number of containers to roll back at a time. If set to 0, all containers are rolled back at the same time.

- delay: the time to wait between rolling back each group of containers (default: 0s).

- failure_action: what to do if the rollback fails. One of continue or pause (default: pause).

- monitor: how long (ns|us|ms|s|m|h) to keep watching for failure after each container is updated (default: 0s).

- max_failure_ratio: the failure rate to tolerate during the rollback (default: 0).

- order: the order of operations during the rollback. One of stop-first (the old container is stopped before the new one starts) or start-first (the new container starts first, so the two briefly overlap) (default: stop-first).

update_config: configures how the service should be updated, which is useful for rolling updates.

- parallelism: the number of containers to update at a time.

- delay: the time to wait between updating each group of containers.

- failure_action: what to do if the update fails. One of continue, rollback, or pause (default: pause).

- monitor: how long (ns|us|ms|s|m|h) to keep watching for failure after each container is updated (default: 0s).

- max_failure_ratio: the failure rate to tolerate during the update.

- order: the order of operations during the update. One of stop-first (the old container is stopped before the new one starts) or start-first (the new container starts first, so the two briefly overlap) (default: stop-first).

Note: order is only supported in Compose file format version 3.4 and higher.
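The deploy example earlier in this section does not show update_config or rollback_config. As a minimal sketch of how the options described above could fit together in swarm mode, consider the following; the service name, image, and all values are illustrative assumptions rather than recommendations:

```yaml
version: "3.7"
services:
  web:
    image: nginx:alpine          # hypothetical image for illustration
    deploy:
      replicas: 4
      update_config:
        parallelism: 2           # update two containers at a time
        delay: 10s               # wait 10 seconds between groups
        failure_action: rollback # roll back if the update fails
        monitor: 30s             # watch each updated container for 30 seconds
        max_failure_ratio: 0.25
        order: start-first       # start the new container before stopping the old one
      rollback_config:
        parallelism: 0           # roll back all containers at the same time
        order: stop-first
```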

devices

Specifies a list of device mappings.

devices:
  - "/dev/ttyUSB0:/dev/ttyUSB0"

dns

Sets custom DNS servers, either as a single value or as a list.

dns: 8.8.8.8

dns:
  - 8.8.8.8
  - 9.9.9.9

dns_search

Sets custom DNS search domains, either as a single value or as a list.

dns_search: example.com

dns_search:
  - dc1.example.com
  - dc2.example.com

entrypoint

Overrides the container’s default entrypoint.

entrypoint: /code/entrypoint.sh

The entrypoint can also be given as a list.

entrypoint:
  - php
  - -d
  - zend_extension=/usr/local/lib/php/extensions/no-debug-non-zts-20100525/xdebug.so
  - -d
  - memory_limit=-1
  - vendor/bin/phpunit

env_file

Adds environment variables from a file. Can be a single value or a list.

env_file: .env

It can also be given as a list.

env_file:
  - ./common.env
  - ./apps/web.env
  - /opt/secrets.env

environment

Adds environment variables. You can use either an array or a dictionary. Boolean values must be enclosed in quotes so that the YML parser does not convert them to True or False.
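This section only shows the dictionary form. As a minimal sketch, the array form of the same two variables would look like this; each entry is a plain KEY=value string, so the boolean does not need extra quoting here:

```yaml
environment:
  - RACK_ENV=development
  - SHOW=true
```

Both forms set the same variables. The dictionary form follows.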

environment:
  RACK_ENV: development
  SHOW: 'true'

expose

Exposes ports without publishing them to the host machine; they are accessible only to linked services.

Only the internal port can be specified.

expose:
  - "3000"
  - "8000"

extra_hosts

Adds hostname mappings, similar to the --add-host parameter of the docker client.

extra_hosts:
  - "somehost:162.242.195.82"
  - "otherhost:50.31.209.229"

The above creates entries that map each IP address to its hostname in /etc/hosts inside this service’s containers.

162.242.195.82 somehost
50.31.209.229 otherhost

healthcheck

Checks whether the service’s container is running healthily.

healthcheck:
  test: ["CMD", "curl", "-f", "http://localhost"] # The command that performs the check
  interval: 1m30s # Time between checks
  timeout: 10s # Timeout for a single check
  retries: 3 # Number of retries
  start_period: 40s # Grace period after startup during which failed checks do not count toward retries

image

Specifies the image to start the container from. All of the following formats are valid.

image: redis
image: ubuntu:14.04
image: tutum/influxdb
image: example-registry.com:4000/postgresql
image: a4bc65fd # image ID

logging

The logging configuration for the service.

driver: specifies the logging driver for the service’s containers. The default is json-file. Three options are shown here.

driver: "json-file"
driver: "syslog"
driver: "none"

With the json-file driver only, the following options limit the number and size of log files.

logging:
  driver: json-file
  options:
    max-size: "200k" # maximum size of a single file is 200k
    max-file: "10" # keep at most 10 files

When the file limit is reached, the oldest files are deleted automatically.

With the syslog driver, you can use syslog-address to specify where logs are sent.

logging:
  driver: syslog
  options:
    syslog-address: "tcp://192.168.0.42:123"

network_mode

Sets the network mode.

network_mode: "bridge"
network_mode: "host"
network_mode: "none"
network_mode: "service:[service name]"
network_mode: "container:[container name/id]"

networks

Configures the networks that the container joins, referencing entries under the top-level networks key.

services:
  some-services:
    networks:
      some-network:
        aliases:
          - alias1
      other-network:
        aliases:
          - alias2

networks:
  some-network:
    # Use a custom driver
    driver: custom-driver-1
  other-network:
    # Use a custom driver which takes special options
    driver: custom-driver-2

aliases: other containers on the same network can use either the service name or this alias to connect to one of the service’s containers.
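To make the alias behavior concrete, here is a small sketch in which two services share one network. The service names, images, network name, alias, and password value are all hypothetical:

```yaml
version: "3.7"
services:
  web:
    image: nginx:alpine
    networks:
      - backend
  db:
    image: postgres:alpine
    environment:
      POSTGRES_PASSWORD: example   # placeholder value
    networks:
      backend:
        aliases:
          - database               # extra hostname for db on the backend network
networks:
  backend: {}
```

Under these assumptions, a process in the web container could reach the database container under either hostname, db or database.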

restart

- no: the default restart policy; the container is not restarted under any circumstances.

- always: the container is always restarted.

- on-failure: the container is restarted only if it exits abnormally (with a non-zero exit status).

- unless-stopped: the container is always restarted when it exits, except that containers which had already been stopped are not restarted when the Docker daemon starts.

restart: "no"
restart: always
restart: on-failure
restart: unless-stopped

Note: In swarm mode, use restart_policy instead.

secrets

Stores sensitive data, such as passwords.

version: "3.1"
services:
  mysql:
    image: mysql
    environment:
      MYSQL_ROOT_PASSWORD_FILE: /run/secrets/my_secret
    secrets:
      - my_secret

secrets:
  my_secret:
    file: ./my_secret.txt

security_opt

Overrides the container’s default security labeling scheme.

security_opt:
  - label:user:USER # Set the container's user label
  - label:role:ROLE # Set the container's role label
  - label:type:TYPE # Set the security policy label for the container
  - label:level:LEVEL # Set the container's security level label

stop_grace_period

Specifies how long to wait, when the container does not shut down after receiving SIGTERM (or the signal set by stop_signal), before sending SIGKILL to stop it.

stop_grace_period: 1s # wait 1 second
stop_grace_period: 1m30s # wait 1 minute 30 seconds

The default wait time is 10 seconds.

stop_signal

Sets an alternative signal for stopping the container. SIGTERM is used by default.

In the following example, SIGUSR1 is used instead of SIGTERM to stop the container.

stop_signal: SIGUSR1

sysctls

Sets kernel parameters in the container, in either dictionary or array format.

sysctls:
  net.core.somaxconn: 1024
  net.ipv4.tcp_syncookies: 0

sysctls:
  - net.core.somaxconn=1024
  - net.ipv4.tcp_syncookies=0

tmpfs

Mounts a temporary filesystem inside the container. Can be a single value or a list.

tmpfs: /run

tmpfs:
  - /run
  - /tmp

ulimits

Overrides the container’s default ulimits.

ulimits:
  nproc: 65535
  nofile:
    soft: 20000
    hard: 40000

volumes

Mounts host directories, files, or data volumes into the container.

version: "3.7"
services:
  db:
    image: postgres:latest
    volumes:
      - "/localhost/postgres.sock:/var/run/postgres/postgres.sock"
      - "/localhost/data:/var/lib/postgresql/data"
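The example above uses bind mounts from host paths. A named volume, like logvolume01 in the introductory example, is declared under the top-level volumes key instead. A minimal sketch, with dbdata as a hypothetical volume name:

```yaml
version: "3.7"
services:
  db:
    image: postgres:latest
    volumes:
      - dbdata:/var/lib/postgresql/data   # named volume managed by Docker
volumes:
  dbdata: {}                              # hypothetical volume name
```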
